New Study: How Students Can Develop AI Literacies Through Self-Regulated Learning

Developing AI Literacies Through Self-Regulated Learning: A Human-Centered Approach

How can we ensure that generative AI tools enhance rather than replace student learning? Our recent study in Computers & Education: Artificial Intelligence offers evidence that the answer lies in combining comprehensive AI literacy development with self-regulated learning processes.

Anders, A., & Dux Speltz, E. (2025). Developing generative AI literacies through self-regulated learning: A human-centered approach. Computers & Education: Artificial Intelligence, 9, 100482. https://doi.org/10.1016/j.caeai.2025.100482

We investigated this question through a semester-long “Artificial Intelligence and Writing” course with 38 undergraduate students from 22 different majors. The course design integrated three dimensions of AI literacy—functional, critical/ethical, and creative—with a Plan, Iterate, Evaluate cycle that keeps students in control of their learning process.

The Learning Design

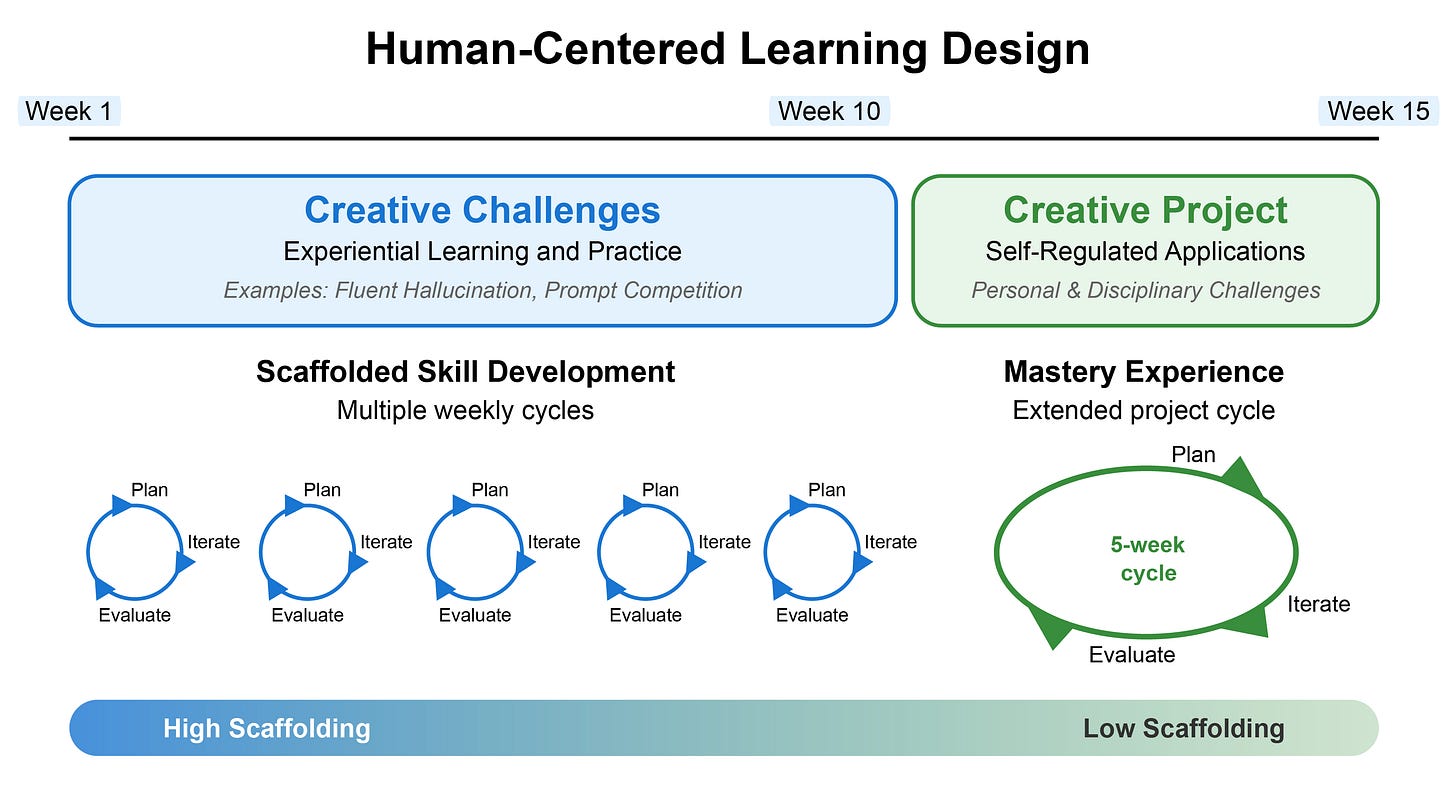

Our approach unfolds in two phases. First, students complete weekly Creative Challenges—structured activities that build specific AI competencies while practicing the complete Plan, Iterate, Evaluate cycle. These challenges progressively develop skills from understanding AI limitations to crafting effective prompts to evaluating outputs critically.

Second, after ten weeks of scaffolded practice, students undertake self-directed Creative Projects addressing real challenges from their personal or academic interests. Projects ranged from interactive fiction with 85,000 words of branching storylines to agricultural decision-support tools to mock interview chatbots. This progression from high scaffolding to low scaffolding ensures students develop both foundational competencies and the ability to apply them independently.

Key Findings for Researchers

For the research community, we offer two primary contributions. First, we developed a validated self-efficacy measure for AI literacies (α = .94–.96) that captures functional, critical/ethical, and creative dimensions alongside situated disciplinary applications. This instrument enables assessment of how students develop confidence across multiple facets of AI use.

Second, our analysis revealed a taxonomy of human-in-the-loop practices showing how students integrate AI literacies with self-regulated learning. These patterns combined domain knowledge activation, iterative refinement strategies, and reflective evaluation—all while maintaining human oversight and creative direction.
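
For readers less familiar with the reliability figure reported for the self-efficacy measure: assuming the α is Cronbach's alpha (the usual internal-consistency coefficient for survey scales; this post does not name the statistic), the minimal Python sketch below shows how such a value is computed. The function name and the ratings are made up for illustration and are not the study's instrument or data.

```python
from statistics import variance


def cronbach_alpha(responses):
    """Cronbach's alpha for a list of respondents, each a list of item ratings."""
    k = len(responses[0])                 # number of items on the scale
    item_columns = list(zip(*responses))  # regroup the ratings by item
    item_variances = [variance(col) for col in item_columns]
    total_variance = variance([sum(row) for row in responses])
    # alpha = k/(k-1) * (1 - sum of item variances / variance of total scores)
    return (k / (k - 1)) * (1 - sum(item_variances) / total_variance)


# Hypothetical ratings: 4 respondents x 3 items on a 1-5 scale (not study data).
ratings = [
    [4, 5, 4],
    [3, 3, 2],
    [5, 5, 5],
    [2, 3, 3],
]
alpha = cronbach_alpha(ratings)
print(f"alpha = {alpha:.2f}")
```

Values close to 1 indicate that respondents' ratings on the individual items rise and fall together, which is the sense in which a scale is described as internally consistent.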

Practical Insights for Educators

The study reveals three essential dimensions of AI literacy that students need to develop together:

Functional literacies involve understanding how AI tools work, selecting appropriate tools for specific tasks, and developing effective interaction strategies. Students progressed from basic prompt writing to sophisticated multi-tool workflows.

Critical and ethical literacies enable students to evaluate AI outputs, identify limitations and biases, and make principled decisions about appropriate use. Students learned to verify information, detect hallucinations, and establish boundaries for AI assistance.

Creative literacies empower students to leverage AI for innovation while maintaining their own vision and agency. Students discovered how to use AI as a thinking partner for brainstorming, iteration, and problem-solving without surrendering creative control.

The Plan, Iterate, Evaluate Cycle

Students who successfully developed these literacies followed a consistent pattern:

Plan: Activate domain knowledge to identify AI applications. Establish clear objectives and evaluation criteria. Connect projects to personal interests or professional goals. Students learned that effective AI use begins with human expertise and clear intentions.

Grounding projects in domain knowledge and real needs: “Farmers make better decisions [but face] a ton of information, a ton of products.”

Connecting to professional goals: “[People] want their dream job but feel like their qualifications or job experience isn’t enough.”

Iterate: Develop customized workflows matching tool capabilities to task requirements. Refine prompts through systematic experimentation. Monitor outputs for quality and relevance. Integrating AI into a project isn’t a one-time event but an ongoing dialogue requiring patience and strategic adaptation.

Building multi-tool workflows: “You start with your script … then from there you go into a prompt that divides your story and creates visuals … put those different visual descriptions into [an] image generator … [then] put it all into one editing software.”

Systematic refinement: “I went through five different iterations of it, and each time I made a new iteration, it would fix a few bugs or issues and add a few more features.”

Evaluate: Assess both products and processes. Identify successful strategies for future use. Reflect on how AI collaboration shaped the work. Students discovered that evaluation reveals not just what AI can do, but how it changes their own capabilities and approaches.

Measuring tangible outcomes: “Over the course of two weeks, I posted 11 different videos, and in total I generated 200,000 views.”

Assessing process impacts: “What used to take me hours was now taking me minutes to do with just AI.”

Moving Forward

Perhaps most significantly, students moved from initial apprehension (many considered AI use “unethical” for learning) to principled engagement. They developed what one student called “a better sense of my skills as a writer, knowing what I could write and what I couldn’t.” This self-awareness represents the human-centered approach we need: AI as a tool that reveals and amplifies human capabilities rather than replacing them.

The persistent challenges students encountered—unpredictable outputs, exhaustive refinement cycles, gaps between ambition and expertise—remind us that effective AI collaboration requires tolerance for ambiguity and continuous adaptation. There’s no perfect prompt or workflow, only ongoing learning.

For educators, this suggests moving beyond technical tutorials or ethical warnings toward sustained experiential learning. Students need opportunities to develop AI literacies through meaningful work in their own disciplines. The specific projects matter less than the process of learning to maintain agency while leveraging AI capabilities.

As generative AI continues evolving, our role as educators isn’t to provide definitive answers but to learn alongside our students. By combining comprehensive literacy development with self-regulated learning processes, we can help students become thoughtful, capable, and creative AI collaborators—ready not just to adapt to technological change, but to shape it.

The full article, “Developing generative AI literacies through self-regulated learning: A human-centered approach,” is available in Computers & Education: Artificial Intelligence.